Phocoustic’s research library is organized around the State Conformance Framework (SCF): a deterministic measurement architecture for verifying whether a surface remains within validated reference conditions.

SCF outputs a structured conformance vector v(t) (position in conformance space), not a single anomaly score. Downstream evaluation of trajectory and envelope proximity is performed by the State Convergence Engine (SCE).

Modern thin-film inspection systems face a fundamental challenge: reliably determining whether a surface remains in its intended physical state. In manufacturing, cleaning validation, semiconductor processing, and materials research, the question is not merely whether something appears “unusual,” but whether the observed scattering field conforms to a defined, expected physical condition.

Thin films and surface perturbations often manifest as subtle changes in optical scattering behavior. These changes may include localized deposition rings, distributed haze, boundary-layer gradients, or directional stress-induced drift. Traditional inspection approaches frequently struggle to distinguish meaningful structural deviation from lighting variation, sensor noise, or benign texture differences. As a result, detection may be inconsistent, over-sensitive, or dependent on large training datasets.

The core problem, therefore, is not anomaly identification in the statistical sense—it is deterministic verification of state conformance under physically grounded criteria.

Most contemporary vision systems are framed as anomaly detection engines. They learn distributions of “normal” from historical datasets and flag deviations probabilistically. While powerful in certain contexts, this approach presents several limitations in thin-film and structured-surface domains:

Training Dependence: Performance depends on representative training data. Subtle surface states not seen during training may be misclassified.

Probabilistic Output: Results are typically confidence scores rather than physically interpretable deviation metrics.

Opacity: Deep models often lack explainability, making root-cause analysis difficult.

Baseline Ambiguity: “Normal” is statistically inferred rather than physically defined.

In high-precision environments—such as wafer inspection, coating validation, or process verification—engineers require measurement repeatability, calibration stability, and traceable deviation metrics. A black-box anomaly score is insufficient when compliance, tolerance thresholds, and process accountability are required.

This work introduces a deterministic State Conformance architecture built on three physically anchored principles:

Drift Quantification – Measure deviation relative to a defined, captured reference state.

Topological Localization – Identify where deviation occurs within structured spatial partitions.

Directional Coherence Assessment – Determine whether deviation exhibits physically meaningful structure.

Rather than asking, “Is this unusual?”, the system asks:

Does the observed scattering field conform to the defined baseline?

Where does deviation emerge?

Is the deviation structurally organized or stochastic?

Is deviation persisting, stabilizing, or accelerating?

This reframing shifts the epistemological stance from probabilistic anomaly inference to measurable state verification.

STRT partitions the observed surface into structured spatial tiles and evaluates each tile relative to a defined reference state. It provides:

Geographic localization of deviation

Quantification of magnitude per region

Boundary and cluster mapping

Topological distribution analysis

STRT transforms deviation from an abstract scalar into a spatially accountable structure.
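The tiling idea can be illustrated with a minimal sketch. It is not Phocoustic's implementation: the function name `tile_deviation`, the square tile size, and the choice of mean absolute difference as the per-tile metric are all assumptions made for illustration.

```python
from typing import List

Image = List[List[float]]


def tile_deviation(observed: Image, reference: Image, tile: int) -> List[List[float]]:
    """Mean absolute deviation from the reference for each tile.

    The metric and the square tiling are illustrative assumptions.
    """
    rows, cols = len(reference), len(reference[0])
    if len(observed) != rows or any(len(r) != cols for r in observed):
        raise ValueError("observed and reference must have the same shape")
    deviation_map = []
    for r0 in range(0, rows, tile):
        tile_row = []
        for c0 in range(0, cols, tile):
            # Collect per-pixel deviations inside this tile (edges are clipped).
            diffs = [
                abs(observed[r][c] - reference[r][c])
                for r in range(r0, min(r0 + tile, rows))
                for c in range(c0, min(c0 + tile, cols))
            ]
            tile_row.append(sum(diffs) / len(diffs))
        deviation_map.append(tile_row)
    return deviation_map


reference = [[0.0] * 4 for _ in range(4)]
observed = [row[:] for row in reference]
observed[0][2] = 4.0  # one perturbed pixel, located in the top-right tile
deviation_map = tile_deviation(observed, reference, tile=2)
```

In this toy case only the top-right tile reports non-zero deviation, which is the sense in which a scalar deviation becomes spatially accountable.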

Once STRT identifies regions of deviation, DIF characterizes their internal structure. It evaluates:

Vector orientation coherence

Drift direction stability

Reinforcement versus stochastic behavior

Energy gradient alignment

Physically meaningful state change typically produces directional coherence; transient noise does not. DIF therefore distinguishes structural instability from random variation.
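One common way to quantify orientation coherence is the mean resultant length of unit-normalized vectors, sketched below. This statistic is a stand-in chosen for illustration, not the DIF method; the function name and the sample vectors are hypothetical.

```python
import math
from typing import Iterable, Tuple


def orientation_coherence(vectors: Iterable[Tuple[float, float]]) -> float:
    """Mean resultant length of unit-normalized vectors, in [0, 1].

    Values near 1 mean the vectors share a direction; values near 0 mean
    the directions cancel, as expected for stochastic variation.
    """
    sum_x = sum_y = 0.0
    count = 0
    for dx, dy in vectors:
        magnitude = math.hypot(dx, dy)
        if magnitude == 0.0:
            continue  # zero vectors carry no direction
        sum_x += dx / magnitude
        sum_y += dy / magnitude
        count += 1
    return math.hypot(sum_x, sum_y) / count if count else 0.0


aligned = [(1.0, 0.0), (2.0, 0.0), (0.5, 0.0)]  # same direction, varying size
opposed = [(1.0, 0.0), (-1.0, 0.0), (0.0, 1.0), (0.0, -1.0)]  # directions cancel
```

Because each vector is normalized before averaging, the score reflects direction only; magnitude would need to be reported separately.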

DAI extends spatial and directional analysis into the temporal domain. It measures first- and second-order derivatives of structured drift metrics to determine:

Instability growth rate

Acceleration of structural change

Early-stage transition behavior

Persistent positive drift acceleration indicates accumulating instability before visible macroscopic failure. DAI transforms state conformance from static measurement into dynamic monitoring.
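As a rough illustration of first- and second-order analysis, the sketch below applies finite differences to a drift metric sampled at uniform intervals. The function names, the uniform sampling, and the `min_run` rule for "persistent" acceleration are assumptions, not disclosed DAI logic.

```python
from typing import List, Sequence, Tuple


def finite_differences(series: Sequence[float]) -> Tuple[List[float], List[float]]:
    """Return (first differences, second differences) of a sampled metric."""
    rate = [b - a for a, b in zip(series, series[1:])]
    acceleration = [b - a for a, b in zip(rate, rate[1:])]
    return rate, acceleration


def persistently_accelerating(series: Sequence[float], min_run: int = 3) -> bool:
    """True if the second difference stays positive for min_run consecutive steps."""
    _, acceleration = finite_differences(series)
    run = 0
    for value in acceleration:
        run = run + 1 if value > 0 else 0
        if run >= min_run:
            return True
    return False


growing = [0.0, 1.0, 3.0, 6.0, 10.0, 15.0]  # drift grows faster each step
linear = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]     # steady drift, no acceleration
```

Real measurements would normally need smoothing before differencing, since second differences amplify noise.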

A controlled experiment was conducted using a matte Astariglass surface subjected to an isopropyl alcohol (IPA) deposition event, producing a characteristic “coffee-ring” structure with interior haze.

The scene naturally separated into three topological regions:

Exterior Reference Region – Nominal baseline surface

Ring Boundary Band – High-gradient transition zone

Interior Haze Region – Distributed thin-film perturbation

Results demonstrated:

STRT successfully localized deviation to the ring boundary and interior region while preserving exterior conformance.

DIF identified strong directional structure at the boundary band, consistent with evaporative transport physics.

Interior haze exhibited distributed but coherent low-magnitude drift distinguishable from background noise.

DAI showed measurable temporal stabilization following evaporation, confirming state transition behavior rather than transient fluctuation.

Importantly, when the surface was cleaned and returned to baseline, the system confirmed null deviation—demonstrating positive conformance verification rather than mere anomaly absence.

This work reframes surface inspection from anomaly detection to State Conformance Verification.

By integrating spatial localization (STRT), directional coherence analysis (DIF), and temporal acceleration modeling (DAI), the system provides:

Deterministic deviation metrics

Physically interpretable outputs

Spatial accountability

Directional structure validation

Early instability forecasting

The result is a structured conformance engine suitable for thin-film validation, semiconductor inspection, coating verification, and precision process control.

Rather than estimating the probability of abnormality, the system confirms adherence to expectation—or quantifies precisely how and where conformance is lost.

These white papers present high-level concepts underlying the Phocoustic™ physics-anchored anomaly-detection and cognitive-reasoning architecture. They do not disclose internal algorithms, thresholds, parameters, execution logic, or implementation details. All specific methods and data structures are defined exclusively within Phocoustic Inc.’s U.S. and international patent filings. Nothing on this page should be interpreted as revealing proprietary logic, limiting patent-claim scope, or providing enabling technical disclosure.

1. Semantic Flux: Semantic Flux introduces a measurement framework that captures meaningful change before labeling or inference. By focusing on persistence, locality, and temporal coherence, it enables earlier and more reliable detection of emerging structure across visual, acoustic, and multimodal sensing applications.

2. Physics-Anchored Semantic Drift Engine: A high-level overview of Phocoustic’s physics-anchored semantic drift extraction posture and its role as an evidence layer that supports downstream interpretation without performing labeling.

3. Baseline Instability and Ground-Zero Noise in Physics-Anchored Semantic System: This paper examines low-contrast baseline experiments using uniform substrates to characterize ground-zero noise, baseline stability, and admissibility constraints. Results show that visually perceptible differences may be suppressed when physical persistence and coherence requirements for semantic interpretation are not satisfied.

4. Quantification of Visually Imperceptible Thin-Film Deposition Using Physics-Anchored Drift-Based State Conformance Metrics: This white paper demonstrates deterministic detection of visually imperceptible thin-film deposition using physics-anchored drift metrics, enabling quantitative surface conformance measurement without machine learning or probabilistic inference.

Version 1.0. This document is intended as a technical white paper and may be updated in future versions.

Conventional vision and artificial intelligence systems often conflate transient variation with meaningful change, leading to sensitivity without repeatability or reliable early warning capability. In physics-anchored perception architectures, measurement of change precedes interpretation and serves as a pre-decisional layer that determines whether downstream reasoning is warranted. This work formalizes semantic flux as a measurable quantity representing the accumulation of spatially localized, temporally persistent change after geometric relevance weighting and lineage enforcement. To characterize where this accumulation becomes concentrated, semantic activation density is introduced as a normalized indicator that distinguishes emergent structure from diffuse variation.

Semantic flux and activation density are treated as pre-decisional artifacts: they encode admissible change without assigning labels, classifications, or inferred meaning. Recurring drift structures give rise to symbolic carriers of structured change, compact representations that preserve how change evolves over time while remaining independent of language. Language models and other inference-based systems are positioned strictly downstream, consuming validated symbolic carriers to produce human-readable descriptions without participating in detection, filtering, or validation.

Evaluations on representative industrial inspection sequences illustrate improved repeatability, enhanced rejection of nuisance variation, and earlier detection of emergent anomalies compared to conventional change-based and inference-driven methods. By formalizing a measurable layer between raw perception and interpretation, this work provides an auditable and reliable foundation for downstream semantic reasoning grounded in persistent physical change.

This paper describes a general measurement framework. It does not disclose implementation details, algorithms, or system architectures.

Human perception exhibits a consistent and well-documented asymmetry: observers often sense that something is changing before they are able to determine what that change represents. This pre-interpretive sensitivity enables early awareness of instability, anomaly, or emergence even when visual evidence is weak, incomplete, or ambiguous. In many practical settings—industrial inspection, degraded sensing environments, or early-stage fault formation—this capability is critical.

By contrast, most contemporary artificial intelligence and computer vision systems bypass this stage entirely. They operate primarily on static representations or instantaneous differences, applying inference or classification directly to raw data. As a result, such systems tend to be highly sensitive to variation while remaining unreliable in the presence of noise, nuisance effects, or subtle, slowly developing change. The consequence is a familiar failure mode: systems that react strongly to transient disturbances yet fail to provide stable, repeatable early warning of meaningful change.

This gap motivates the need for a distinct measurement layer that precedes interpretation—one that determines whether change is admissible for semantic consideration before labels, explanations, or decisions are applied.

A central observation underlying this work is that static scenes are informationally inert. When a system observes no persistent change, there is no basis for interpretation beyond confirming stability. Conversely, not all change is meaningful. Transient variation, stochastic noise, and global nuisance effects may produce large instantaneous differences without conveying reliable information about system state or emerging structure.

Meaningful change arises only when variation is persistent, localized, and structured across time. Such change exhibits continuity, coherence, and lineage: it survives temporal filtering, concentrates spatially, and evolves in a manner that distinguishes it from random fluctuation. This observation suggests that meaning does not originate from instantaneous measurements or from inference alone, but from the accumulation of admissible change over time.

This work adopts the position that identifying and measuring this class of change is a prerequisite for reliable interpretation.

This paper proposes semantic flux as a measurable, repeatable quantity that captures admissible, structured change prior to symbolic or linguistic interpretation. Semantic flux is defined as the accumulation of spatially localized, temporally persistent change under explicit geometric and lineage constraints. It exists as a pre-decisional artifact: a measurement-layer construct that determines when downstream interpretation is warranted, without assigning labels or inferred meaning.

Importantly, semantic flux is presented as a general measurement framework, not as a system-specific feature. It is intended to be applicable across sensing modalities and architectural implementations. Phocoustic is introduced later in this work as one concrete instantiation that demonstrates the practical utility of semantic flux within a physics-anchored perception system, but it does not bound or define the framework itself.

The primary contributions of this work are as follows:

Formal definition of semantic flux as an accumulative, pre-interpretive measure of persistent change distinct from instantaneous variation or inference-based scores.

Introduction of semantic activation density, enabling spatial localization and concentration analysis of admissible change.

A geometry-weighted, tiled measurement framework that enforces locality and supports repeatable evaluation across time.

Explicit separation of detection and interpretation, positioning semantic flux as a measurement-layer construct and downstream language or inference systems as consumers rather than generators of meaning.

Empirical validation against baseline methods, demonstrating improved repeatability, noise rejection, and earlier detection of emergent anomalies in inspection scenarios.

Together, these contributions establish semantic flux as a transferable foundation for systems that require reliable early warning, explainability, and auditability, independent of any specific product, sensing modality, or interpretive mechanism.

The problem of detecting meaningful change in visual data has been approached from multiple directions, including pixel-level differencing, statistical learning, physics-informed modeling, and, more recently, language-augmented vision systems. Each contributes useful tools, but none directly addresses the core question posed in this paper: how to define and measure change that persists in a principled, modality-agnostic way.

Classical techniques such as frame differencing, background subtraction, and optical flow are explicitly designed to detect change between successive frames. These methods are computationally efficient and sensitive to small variations in motion or intensity. However, they are inherently local and instantaneous. Noise, illumination fluctuation, and viewpoint jitter are often indistinguishable from meaningful change.

Crucially, these approaches lack persistence and lineage. Change is detected, but not remembered. There is no mechanism to determine whether a deviation represents a transient fluctuation, a stable transformation, or the early stages of a developing anomaly. As a result, sensitivity is achieved at the cost of reliability.

Statistical models and convolutional neural networks (CNNs) dominate modern anomaly detection in vision. These systems excel at pattern recognition when trained on large, representative datasets. When anomalies closely resemble those seen during training, performance can be strong.

However, such systems are fundamentally reference-dependent. They require prior examples, retraining for new domains, and careful curation to avoid bias. Generalization outside the training distribution remains fragile, particularly for low-contrast, emergent, or previously unseen changes. Failure modes are often opaque: a misclassification provides little insight into why a change was ignored or amplified.

Physics-informed approaches attempt to ground perception in constraints such as geometry, optics, material behavior, or energy conservation. These methods improve interpretability and reduce some forms of spurious detection. They are especially effective in controlled environments where physical models are well understood.

Nonetheless, most physics-informed systems remain state-based. They describe what is observed at a given moment, rather than how admissible change accumulates over time. Temporal persistence is often treated as a secondary filter rather than as a first-class quantity.

Recent vision-language systems leverage large language models to explain, summarize, or contextualize visual inputs. These models are powerful at interpretation and communication, particularly when reasoning about objects, scenes, or actions.

Yet language models are weak detectors. They rely on upstream perception systems to provide stable inputs, and their interpretive role is often conflated with the task of measurement itself. Without a grounded representation of persistent change, linguistic explanations risk being confident descriptions of unstable or noisy signals.

Across these approaches, a common limitation emerges. There is no explicit, general measure of change that survives time—one that distinguishes transient variation from structurally meaningful evolution. Moreover, there is no principled boundary separating measurement from interpretation. Detection and explanation are frequently entangled, obscuring failure modes and limiting generality.

The Semantic Flux framework is proposed to address these gaps by treating persistent change as a measurable quantity in its own right, prior to and independent of symbolic or linguistic interpretation.

The Semantic Flux framework reframes perception around a single organizing principle: persistent change, rather than instantaneous state. This section introduces the conceptual assumptions that govern the measurement of semantic flux and motivate its separation from interpretation.

In the proposed framework, stability is treated as the null hypothesis. A scene that remains invariant over time—within physically admissible tolerances—carries no semantic signal. Static structure, regardless of visual complexity, is informationally inert with respect to change.

This stands in contrast to many vision systems that attempt to extract meaning from single frames or fixed configurations. Semantic flux instead assumes that meaning does not reside in structure alone, but in the departure from structure. If nothing is changing, there is nothing to explain, predict, or interpret.

Under this assumption, the absence of persistent change is not a failure case; it is a valid and expected outcome corresponding to semantic zero.

Not all variation constitutes meaningful change. The framework distinguishes sharply between noise and drift.

Noise is transient, incoherent, and non-local. It appears sporadically, lacks directional consistency, and does not maintain identity across time. Examples include sensor jitter, illumination flicker, compression artifacts, or stochastic pixel fluctuations. Noise does not accumulate and cannot support lineage.

Drift, by contrast, is persistent, localized, and directional. It exhibits continuity across frames, maintains spatial coherence, and evolves in a manner consistent with physical or structural constraints. Drift can be weak, gradual, or low-contrast, yet still meaningful if it survives temporal validation.

Semantic flux is defined only over drift. Noise is explicitly excluded not through heuristic thresholds, but through the requirement of persistence and directional consistency.

Rather than treating change as a sequence of isolated events, semantic flux models change as a discrete field defined over space and time. Each local region contributes to a flow of change, and these flows may strengthen, decay, or interact across frames.

In this view, meaning eligibility does not arise from any single state of the system. It emerges from the flow—the structured evolution of change across the field. A region becomes semantically interesting not because of what it looks like at an instant, but because of how it moves through admissible change space over time.

This field-based interpretation provides a natural boundary between measurement and interpretation. Semantic flux quantifies the flow. Language, labels, or decisions may act upon it later, but they are not required for its existence.

This section introduces the minimal formal machinery required to define semantic flux as a measurable quantity. The definitions are intentionally discrete, finite, and implementation-agnostic. No continuous field theory or probabilistic assumptions are required.

Let an input scene be represented as a sequence of frames over time. Each frame is partitioned into a fixed grid of spatial tiles. These tiles serve as local measurement cells and define the atomic units over which change is evaluated.

Tiling imposes no semantic meaning by itself. Its purpose is to localize measurement, constrain spatial support, and enable consistent temporal comparison. All subsequent operators act on tile-indexed quantities.

Not all spatial regions contribute equally to a given measurement. The region of interest is the subset of the observed scene selected according to geometric, optical, or structural constraints that determine where meaningful change is expected to occur.

Frame-to-frame change is first computed locally per tile, then projected onto the region of interest. Tiles that fall outside the ROI, or that violate geometric admissibility constraints, are suppressed or down-weighted.

This step enforces locality and prevents diffuse, scene-wide variation from contributing to semantic flux. Only change that is geometrically consistent with the region under evaluation is retained.
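The projection step can be sketched as a per-tile weighting. The sketch assumes each tile has already been reduced to a single scalar (for example, a mean intensity) and that ROI membership is expressed as a weight in [0, 1]; both choices, and the name `weighted_change`, are illustrative assumptions.

```python
from typing import List

TileGrid = List[List[float]]


def weighted_change(prev_tiles: TileGrid, curr_tiles: TileGrid,
                    roi_weights: TileGrid) -> TileGrid:
    """Absolute tile-wise change, scaled by an ROI weight per tile.

    A weight of 0.0 suppresses a tile, 1.0 keeps it, and values in
    between down-weight it.
    """
    return [
        [w * abs(c - p) for p, c, w in zip(prev_row, curr_row, weight_row)]
        for prev_row, curr_row, weight_row in zip(prev_tiles, curr_tiles, roi_weights)
    ]


prev_tiles = [[1.0, 1.0], [1.0, 1.0]]
curr_tiles = [[3.0, 1.0], [1.0, 5.0]]
roi_weights = [[1.0, 1.0], [0.5, 0.0]]  # bottom-right tile lies outside the ROI
change = weighted_change(prev_tiles, curr_tiles, roi_weights)
```

Here the large change in the bottom-right tile is discarded because it falls outside the region under evaluation, while the in-ROI change in the top-left tile is retained.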

Let W denote a finite temporal window spanning multiple frames. A persistence operator is applied to the tile-wise change signals across this window.

For change to be considered admissible, it must:

Persist across the window W,

Maintain directional coherence (i.e., not oscillate randomly), and

Remain localized to a consistent spatial neighborhood.

Transient, incoherent, or reversing signals are rejected at this stage. Persistence is therefore not a smoothing operation, but a validation gate that separates drift from noise.
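A minimal sketch of such a gate for a single tile is shown below. It assumes the tile's change over the window is available as a signed value per frame, and it covers only the first two criteria (persistence and directional coherence); spatial localization is not modeled. The `floor` parameter and the function name are hypothetical.

```python
from typing import Sequence


def is_persistent(signed_changes: Sequence[float], floor: float = 0.0) -> bool:
    """Gate one tile's signed change signal over a window.

    Admissible only if every sample exceeds the floor in magnitude and
    all samples share the same sign (no reversal, no dropout).
    """
    if not signed_changes:
        return False
    all_positive = all(value > floor for value in signed_changes)
    all_negative = all(value < -floor for value in signed_changes)
    return all_positive or all_negative


drift = [0.20, 0.30, 0.25]    # weak but consistent: passes
flicker = [0.90, -0.80, 0.70]  # large but reversing: rejected
dropout = [0.40, 0.00, 0.40]   # does not survive the whole window: rejected
```

Note that the gate returns a pass or fail decision rather than an averaged value, which is the difference between validation and smoothing.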

Semantic flux is defined as the accumulated admissible change within a region R over a time window T.

Semantic flux is computed by iterating over each frame in the time window and, at each frame, summing the tile-wise changes within the region of interest that have passed geometric filtering and temporal persistence validation. The final value reflects the total accumulated admissible change across both space and time.

No exotic mathematics is implied. Semantic flux is an additive quantity that increases only when admissible change accumulates over time.
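The accumulation described above can be sketched as a double loop over frames and tiles. The data layout is an assumption: `change[t]` maps a tile index to a non-negative change magnitude, and `admissible[t]` is the set of tiles that passed geometric filtering and persistence validation at frame t. How those sets are produced is outside the sketch.

```python
from typing import Dict, List, Set, Tuple

Tile = Tuple[int, int]


def semantic_flux(change: List[Dict[Tile, float]], roi: Set[Tile],
                  admissible: List[Set[Tile]]) -> float:
    """Sum admissible tile-wise change over every frame in the window."""
    total = 0.0
    for t, frame in enumerate(change):
        for tile, value in frame.items():
            # Only tiles inside the ROI that passed validation contribute.
            if tile in roi and tile in admissible[t]:
                total += value
    return total


change = [
    {(0, 0): 0.5, (0, 1): 2.0},
    {(0, 0): 0.25, (0, 1): 1.0},
]
roi = {(0, 0)}                         # tile (0, 1) is outside the ROI
admissible = [{(0, 0), (0, 1)}, {(0, 0)}]
flux = semantic_flux(change, roi, admissible)
```

Since only non-negative admissible terms are added, the value can stay flat or grow as the window extends, but it never decreases.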

To enable comparison across regions and time scales, semantic flux may be normalized by spatial extent and temporal duration.

Semantic activation density expresses how concentrated persistent change is, on average, per unit area and per unit time. It is obtained by dividing the accumulated admissible change by the spatial extent of the region being evaluated and by the duration of the observation window.

In this normalization, |R| denotes the number of spatial tiles in the region of interest and |T| denotes the number of discrete time indices in the analysis window.
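The two verbal definitions can be written compactly as follows. The symbols Φ (semantic flux), ρ (semantic activation density), and a_i(t) (admissible change in tile i at time index t, taken as zero when the tile fails geometric or persistence validation) are assumed notation introduced here; only |R| and |T| come from the text.

```latex
\Phi(R, T) = \sum_{t \in T} \sum_{i \in R} a_i(t),
\qquad
\rho(R, T) = \frac{\Phi(R, T)}{|R| \, |T|}
```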

This quantity reflects the intensity of persistent change per unit area and per unit time. It allows small but highly active regions to be distinguished from large regions with diffuse or marginal drift.

Semantic activation density is a normalized measurement and should not be read as a probabilistic or physical density.
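A small numeric sketch shows why the normalization matters. The function name and the sample values are hypothetical; the computation is simply accumulated flux divided by tile count and frame count.

```python
def activation_density(flux: float, n_tiles: int, n_frames: int) -> float:
    """Accumulated admissible change per tile per frame."""
    if n_tiles <= 0 or n_frames <= 0:
        raise ValueError("region and window must be non-empty")
    return flux / (n_tiles * n_frames)


# Same total flux, very different concentration.
small_active = activation_density(6.0, n_tiles=2, n_frames=3)
large_diffuse = activation_density(6.0, n_tiles=20, n_frames=3)
```

Both regions accumulate the same flux, yet the two-tile region has ten times the density of the twenty-tile region, which is the distinction raw flux alone cannot express.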

Semantic flux does not directly encode meaning. Instead, recurring patterns of admissible drift may be assigned symbolic carriers—compact identifiers that reference characteristic modes of change.

Symbolic carriers do not represent appearance, texture, or object identity. They encode how change evolves: its directionality, persistence profile, and geometric footprint. These carriers enable downstream systems to reference, compare, and reason about recurring change structures without reprocessing raw measurements.

Crucially, symbolic carriers are optional and downstream. Semantic flux exists independently of any symbolic assignment.

The Semantic Flux framework is realized as a layered architecture that enforces a strict separation between measurement, symbol formation, and interpretation. Each layer operates on well-defined inputs and outputs, and no layer is permitted to subsume the role of another.

The measurement layer operates entirely below language and symbolism. Its function is to detect, validate, and accumulate admissible change without assigning meaning or labels.

This layer includes:

Tiling, which discretizes the scene into local measurement cells;

Geometry weighting, which restricts contribution to geometrically admissible regions of interest;

Persistence filtering, which enforces temporal survival and directional coherence; and

Flux accumulation, which produces semantic flux values and derived densities.

All processing at this stage is deterministic, physically grounded, and time-aware. The result is a set of numerical measures that describe how change accumulates over specific regions and time intervals. No symbolic interpretation or semantic labeling is performed at this stage.

The symbol formation layer converts validated measurements into compact, reusable representations without introducing language.

Its responsibilities include:

Drift clustering, where recurring patterns of admissible change are grouped based on similarity of evolution;

Lineage tracking, which maintains identity of drift structures across time windows; and

Carrier assignment, where stable drift patterns are assigned symbolic carriers.

These carriers function as references to how change behaves, not what it represents. They encode persistence profiles, geometric footprint, and directional evolution. The result is a symbolic substrate that is stable, auditable, and detached from raw sensory data.

The interpretation layer consumes symbolic carriers and associated metadata to produce human-readable explanations, summaries, or decisions.

Its inputs consist exclusively of:

Symbolic carrier identifiers,

Temporal and spatial metadata, and

Quantitative flux descriptors.

Its output is language.

Critically, this layer has no access to raw sensor data, tiles, frames, or flux computation mechanisms. It cannot influence measurement, persistence validation, or symbol formation.

Language models do not and cannot generate semantic flux.

Semantic flux arises only from persistent, admissible change measured over space and time. Language models operate solely on symbolic inputs provided to them. They may explain, contextualize, or reason about flux-derived symbols, but they cannot create, amplify, or suppress semantic flux itself.

This architectural separation ensures that detection remains grounded, interpretation remains accountable, and failure modes are observable rather than entangled.

The experimental design is constructed to evaluate whether semantic flux can reliably distinguish persistent, meaningful change from transient variation across representative inspection scenarios. The emphasis is on longitudinal behavior rather than single-frame accuracy.

Three classes of image sequences are used to evaluate the framework:

PCB inspection sequences, consisting of repeated captures of printed circuit board regions under nominally stable conditions. These sequences include fine traces, solder features, and low-contrast surfaces where early defects are difficult to detect visually.

Wafer surface sequences, comprising tiled or cropped views of semiconductor wafer regions captured across time. These sequences emphasize subtle surface non-uniformities, faint line structures, and slow material evolution.

Controlled micro-change overlays, where synthetic or semi-synthetic perturbations are introduced into otherwise stable sequences. These overlays simulate faint, localized changes that evolve gradually across frames while remaining below visual salience thresholds.

Together, these datasets span real-world inspection data and controlled test cases, allowing both qualitative and quantitative evaluation.

Detailed datasets are omitted here and will be included in future technical or journal versions of this work.

Each sequence is evaluated under one of three controlled conditions:

Stable: No physically meaningful change is present. Minor sensor noise, illumination variation, or compression artifacts may occur, but no persistent drift is introduced.

Nuisance-only: Sequences include transient disturbances such as flicker, jitter, or global intensity fluctuation. These effects are designed to challenge sensitivity while lacking persistence or spatial coherence.

Emergent micro-defect: A small, localized change evolves gradually across time. The change is persistent, directional, and spatially consistent, but may remain visually indistinguishable in individual frames.

These conditions are designed to test the null hypothesis of stability, the rejection of noise, and the detection of admissible drift, respectively.

Semantic flux measurements are compared against common change-detection and anomaly-detection baselines, including:

Frame difference energy, computed as the summed absolute pixel differences between successive frames;

Structural Similarity Index (SSIM) delta, measuring perceptual structural change between frames;

Optical flow magnitude, aggregated over the region of interest; and

CNN-based anomaly score (optional), derived from a trained convolutional model where applicable.

Baselines are evaluated using identical regions and time windows to ensure comparability. Performance is assessed in terms of false activation under stable and nuisance-only conditions, and early activation under emergent micro-defect conditions.
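Of the baselines listed, frame difference energy is simple enough to sketch directly from its description. The function names are hypothetical, and frames are represented as nested lists of pixel intensities for the sake of a dependency-free example.

```python
from typing import List, Sequence

Frame = Sequence[Sequence[float]]


def frame_difference_energy(prev: Frame, curr: Frame) -> float:
    """Summed absolute pixel difference between two frames."""
    return sum(
        abs(c - p)
        for prev_row, curr_row in zip(prev, curr)
        for p, c in zip(prev_row, curr_row)
    )


def energy_series(frames: Sequence[Frame]) -> List[float]:
    """Energy for each pair of successive frames."""
    return [frame_difference_energy(a, b) for a, b in zip(frames, frames[1:])]


frames = [
    [[0.0, 0.0], [0.0, 0.0]],
    [[1.0, 0.0], [0.0, 2.0]],
    [[1.0, 0.0], [0.0, 2.0]],
]
energies = energy_series(frames)
```

The measure responds to any pixel change and then drops back to zero, with no memory of what came before.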

Evaluation focuses on temporal reliability, noise discrimination, and spatial consistency rather than single-frame accuracy. All metrics are computed over repeated runs using identical data and windowing parameters unless otherwise noted.

Detailed numerical tables are omitted here and will be included in future technical or journal versions of this work.

Repeatability measures the stability of semantic flux outputs across identical experimental runs. For a fixed sequence and region of interest, semantic flux values are computed multiple times under the same conditions.

Repeatability is quantified using the coefficient of variation (CV), defined as the ratio of the standard deviation to the mean of the measured flux values. Lower CV indicates higher repeatability and reduced sensitivity to stochastic variation.

This metric evaluates whether semantic flux behaves as a stable measurement rather than a volatile score.
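The coefficient of variation follows directly from its definition. The sketch below uses the population standard deviation; whether the evaluation used the population or sample form is not stated, so that choice is an assumption, as are the sample values.

```python
import statistics
from typing import Sequence


def coefficient_of_variation(values: Sequence[float]) -> float:
    """Ratio of standard deviation to mean for repeated flux measurements."""
    mean = statistics.fmean(values)
    if mean == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return statistics.pstdev(values) / mean


identical_runs = [10.0, 10.0, 10.0]
varying_runs = [9.0, 11.0]
```

For a fully deterministic pipeline run on identical data, the expected CV is zero; any non-zero value points to a stochastic component somewhere in the processing chain.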

The noise rejection ratio compares semantic activation under nuisance-only conditions to activation under stable conditions.

For each sequence class, the ratio is computed as the mean semantic flux (or activation density) observed under nuisance perturbations divided by that observed under stable sequences. Ratios near unity indicate effective suppression of nuisance variation.

This metric assesses the framework’s ability to treat noise as non-semantic without requiring explicit noise modeling.
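The ratio described above might be computed as in the following sketch. The function name and the guard against a zero stable-condition mean are assumptions added for illustration.

```python
import numpy as np

def noise_rejection_ratio(nuisance_flux, stable_flux, eps=1e-12):
    """Mean flux under nuisance-only conditions divided by mean flux
    under stable conditions. Values near 1.0 suggest nuisance variation
    is being suppressed."""
    nuisance_mean = float(np.mean(nuisance_flux))
    stable_mean = float(np.mean(stable_flux))
    if abs(stable_mean) < eps:
        # Hypothetical guard: the ratio is undefined for a zero denominator.
        raise ValueError("stable-condition mean flux is zero")
    return nuisance_mean / stable_mean

# Toy values only; these are not measurements from this work.
ratio_suppressed = noise_rejection_ratio([1.0, 1.2, 0.8], [1.0, 1.0, 1.0])
ratio_leaky = noise_rejection_ratio([3.0, 5.0], [1.0, 1.0])
```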

Persistence lift measures the degree to which detections survive temporal validation.

It is defined as the fraction of detections that remain active across a predefined persistence window relative to the total number of initial activations. Higher values indicate that detected changes are temporally coherent rather than transient spikes.

Persistence lift directly reflects the effectiveness of the persistence operator in distinguishing drift from noise.
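One way to make this definition concrete is sketched below. It assumes a per-tile boolean activation series, treats each off-to-on transition as an initial activation, and counts an activation as surviving when it stays active for `window` consecutive frames. These conventions, and the choice to return 0.0 when there are no activations, are assumptions of the sketch rather than definitions from this work.

```python
import numpy as np

def persistence_lift(active, window):
    """Fraction of initial activations that stay active for `window` frames."""
    active = np.asarray(active, dtype=bool)
    # An onset is a frame where activation switches from off to on.
    previous = np.concatenate(([False], active[:-1]))
    onsets = np.flatnonzero(active & ~previous)
    if onsets.size == 0:
        return 0.0  # convention chosen for this sketch
    survived = 0
    for start in onsets:
        end = start + window
        # Activations too close to the end of the series cannot be validated.
        if end <= active.size and active[start:end].all():
            survived += 1
    return survived / onsets.size

# Three onsets (frames 1, 4, 9); only the one at frame 4 lasts 3 frames.
series = [0, 1, 0, 0, 1, 1, 1, 1, 0, 1, 1, 0]
lift = persistence_lift(series, window=3)
```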

ROI localization consistency evaluates spatial stability of detected change.

For each sequence, the top-k regions of highest semantic flux are identified per time window. Consistency is measured as the fraction of these regions that remain within the same spatial neighborhood across successive windows.

This metric quantifies whether detected change maintains spatial identity over time, rather than wandering due to noise or global effects.
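The consistency measure can be sketched as follows. The neighborhood test used here (Chebyshev distance of at most `radius` tiles to any top-k tile in the next window) is an assumption, as the text does not specify how "same spatial neighborhood" is evaluated; all function names are illustrative.

```python
import numpy as np

def top_k_tiles(flux_map, k):
    """Return (row, col) indices of the k tiles with the highest flux."""
    flux_map = np.asarray(flux_map, dtype=np.float64)
    flat = np.argsort(flux_map, axis=None)[::-1][:k]
    rows, cols = np.unravel_index(flat, flux_map.shape)
    return np.stack([rows, cols], axis=1)

def localization_consistency(flux_maps, k=3, radius=1):
    """Fraction of top-k tiles that reappear within `radius` tiles
    in the following window, pooled over successive window pairs."""
    matched, total = 0, 0
    for current, following in zip(flux_maps[:-1], flux_maps[1:]):
        cur = top_k_tiles(current, k)
        nxt = top_k_tiles(following, k)
        # Chebyshev distance between every current/next tile pair.
        dist = np.abs(cur[:, None, :] - nxt[None, :, :]).max(axis=2)
        matched += int((dist.min(axis=1) <= radius).sum())
        total += len(cur)
    return matched / total if total else 0.0

def peak_map(peaks, shape=(4, 4)):
    """Toy flux map with distinct peak values at the given tiles."""
    m = np.zeros(shape)
    for i, (r, c) in enumerate(peaks):
        m[r, c] = 10.0 - i
    return m

stable = [peak_map([(1, 1), (1, 2)]) for _ in range(3)]
wandering = [peak_map([(0, 0), (0, 1)]), peak_map([(3, 3), (3, 2)])]
consistency_stable = localization_consistency(stable, k=2)
consistency_wandering = localization_consistency(wandering, k=2)
```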

Lead time measures the temporal advantage of semantic flux detection relative to baseline methods.

For emergent micro-defect sequences, lead time is defined as the number of frames by which semantic flux activation precedes the first reliable detection by baseline metrics (e.g., SSIM delta, optical flow magnitude, or CNN anomaly score).

Positive lead time indicates earlier recognition of persistent change, even when the change is not yet visually salient.
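A minimal sketch of the lead-time computation follows. Here "reliable detection" is approximated by requiring a signal to stay at or above its threshold for `hold` consecutive frames; that rule, the thresholds, and the function names are assumptions for illustration only.

```python
import numpy as np

def first_sustained_crossing(signal, threshold, hold=1):
    """First frame index at which the signal stays >= threshold
    for `hold` consecutive frames, or None if that never happens."""
    above = np.asarray(signal, dtype=np.float64) >= threshold
    for start in range(above.size - hold + 1):
        if above[start:start + hold].all():
            return start
    return None

def lead_time(flux, baseline, flux_threshold, baseline_threshold, hold=1):
    """Frames by which flux activation precedes baseline detection.
    Positive values mean semantic flux activated first."""
    flux_frame = first_sustained_crossing(flux, flux_threshold, hold)
    base_frame = first_sustained_crossing(baseline, baseline_threshold, hold)
    if flux_frame is None or base_frame is None:
        return None
    return base_frame - flux_frame

# Toy signals: flux ramps steadily; the baseline has an isolated spike
# at frame 1 and only responds in a sustained way from frame 6.
flux = [0, 0, 1, 2, 3, 4, 5, 6]
baseline = [0, 5, 0, 0, 0, 0, 4, 5]
lead = lead_time(flux, baseline, flux_threshold=2, baseline_threshold=4, hold=2)
```

Requiring a sustained crossing on both signals keeps an isolated baseline spike from being counted as a detection, which would otherwise understate the lead time.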

Results are presented to illustrate the temporal, spatial, and repeatability characteristics of semantic flux under the experimental conditions described in Section 6. No task-specific tuning or post hoc thresholding was applied beyond parameters fixed prior to evaluation.

Time-series plots of semantic activation were generated for all sequences, comparing geometry-weighted semantic flux against unweighted change accumulation.

Across stable and nuisance-only conditions, unweighted measures exhibited frequent low-level activation driven by global variation and transient noise. Geometry-weighted flux remained near baseline, showing minimal accumulation over time.

Under emergent micro-defect conditions, geometry-weighted semantic flux exhibited a gradual, monotonic increase consistent with persistent localized change. Unweighted measures either responded late or showed oscillatory behavior that did not accumulate reliably.

These comparisons demonstrate that weighting by region geometry materially alters temporal behavior, suppressing diffuse variation while preserving persistent drift.

Spatial distributions of semantic flux were visualized as tile-wise heatmaps overlaid on the original frames.

In stable and nuisance-only sequences, activation was sparse and spatially inconsistent, with no tile retaining elevated flux across windows. In emergent micro-defect sequences, activation localized to a compact region and intensified gradually over time.

Importantly, the location of peak activation remained stable across successive windows, even when the underlying visual change remained difficult to perceive in individual frames. This stability was not observed in baseline spatial measures.

Repeatability metrics were summarized in tabular form across multiple identical runs.

Semantic flux measurements exhibited low coefficients of variation across all sequence classes, with the lowest variability observed in stable and nuisance-only conditions. Emergent micro-defect sequences showed slightly higher variance, attributable to gradual signal accumulation, but remained well within acceptable bounds for longitudinal measurement.

Baseline methods showed substantially higher variability, particularly in nuisance-only conditions, where transient effects produced inconsistent activations across runs.

Detailed numerical tables are omitted here and will be included in future technical or journal versions of this work.

Representative early warning cases are presented as visual sequences paired with activation plots.

In these examples, semantic flux crossed activation thresholds several frames prior to any reliable indication from baseline methods. Visual inspection confirmed that the underlying change was present but not salient at the time of activation.

These cases illustrate that semantic flux responds to the persistence of change rather than its immediate visibility, providing early indication without reliance on trained templates or appearance models.

The results presented in Section 8 highlight a consistent pattern: semantic flux behaves as a stable measurement of persistent change, while baseline methods tend to respond to instantaneous variation. This section explains why the framework succeeds, where its limits lie, and why language-only systems cannot substitute for it.

Semantic flux succeeds because it enforces three constraints that are typically violated or weakened in conventional vision systems.

First, it enforces locality. Change is evaluated within bounded spatial tiles and projected onto defined regions of interest. This prevents diffuse, scene-wide variation from accumulating semantic weight and ensures that activation remains tied to specific spatial structures.

Second, it enforces time. Change must survive across a persistence window and maintain directional coherence. Instantaneous differences, regardless of magnitude, do not qualify. This requirement converts detection from a snapshot problem into a longitudinal measurement.

Third, it enforces geometry. Only change that is consistent with the geometry of the region under evaluation contributes to semantic flux. This constraint filters out changes that are spatially inconsistent with the underlying structure, even if they are visually prominent.

Together, these constraints ensure that semantic flux accumulates only when change is physically plausible, spatially coherent, and temporally persistent.

Semantic flux is not intended to detect all forms of change.

One failure mode arises under global illumination collapse, such as abrupt lighting loss or saturation affecting the entire scene uniformly. In such cases, locality and geometry weighting may suppress activation because the event is interpreted as non-structural, so semantic flux alone will not report it.

Another limitation occurs with extremely rapid catastrophic change, where meaningful transformation happens within fewer frames than the persistence window allows. In these cases, semantic flux may lag detection by design, favoring reliability over immediacy.

These failure modes reflect deliberate design trade-offs rather than implementation defects.

Large language models are powerful tools for explanation and reasoning, but they cannot replace semantic flux.

Language models do not maintain persistence memory over raw sensory data. They operate on provided tokens, not on evolving physical signals across time.

They lack physical grounding. Without access to geometry, spatial locality, and admissible change constraints, they cannot distinguish noise from drift in a principled way.

They also lack locality constraints. Language models process symbols globally; they do not enforce spatial neighborhood consistency or region-specific validation.

As a result, language models may describe, summarize, or contextualize semantic flux, but they cannot generate it. Semantic flux must exist prior to language.

The Semantic Flux framework is intentionally constrained. Its strengths arise from these constraints, but they also define clear limitations.

First, semantic flux requires temporal data. Because it measures persistent change, it cannot operate on single images or isolated snapshots. Applications that lack repeated observation over time are outside its scope.

Second, the framework requires region-of-interest geometry. Locality and geometric weighting are core to noise rejection and drift validation. When no meaningful geometric constraints can be defined, semantic flux may become overly conservative or ambiguous.

Third, semantic flux is not a replacement for classifiers. It does not assign object identity, defect class, or semantic labels. Instead, it provides a pre-linguistic measurement of change that may be consumed by downstream classifiers or decision systems.

Finally, semantic flux does not constitute semantic “understanding.” It does not reason, infer intent, or interpret meaning. It measures admissible change and nothing more. Any notion of understanding arises only when semantic flux is combined with symbolic or linguistic interpretation layers.

These limitations are deliberate. By restricting scope, the framework maintains reliability, auditability, and generality across domains.

Semantic flux is presented in this work as a general measurement framework for persistent change, rather than as a system-specific feature or product-bound capability. The core contribution lies in formalizing an intermediate quantity that distinguishes admissible, structured change from transient variation prior to semantic labeling or inference. By separating measurement from interpretation, the framework addresses a foundational challenge shared across perception, inspection, and artificial intelligence systems: determining when downstream reasoning is warranted.

The framework is intentionally modality-agnostic. While experimental demonstrations in this work derive semantic flux from visual inspection sequences, the underlying principles of spatial localization, temporal persistence, and lineage consistency apply equally to other time-varying signals, including acoustic measurements, electromagnetic sensing, and hybrid or structured illumination modalities. Semantic flux operates on normalized representations of change rather than raw sensor values, enabling transfer across domains without requiring domain-specific retraining or semantic priors.

From a systems perspective, semantic flux occupies a pre-decisional measurement layer. It produces auditable artifacts that characterize how change accumulates and concentrates over space and time without assigning labels, classifications, or inferred meaning. This positioning allows semantic flux to complement, rather than replace, existing inference-based or learning-based methods. By constraining interpretation to regions and intervals where persistent change is present, the framework can reduce false positives, improve repeatability, and support earlier detection of emergent phenomena.

Semantic flux provides a missing measurement layer between raw perception and language. Rather than treating meaning as an emergent property of static scenes or model inference, it frames meaning eligibility as a function of persistent, localized change. By enforcing locality, geometry, and time, semantic flux transforms detection into a repeatable measurement problem: change is validated before it is interpreted, accumulated before it is labeled, and bounded before it is explained. This enables systems to respond predictively to emerging structure without relying on training data, appearance models, or linguistic inference at the detection stage.

Phocoustic serves as one implementation that demonstrates the practical utility of semantic flux within a physics-anchored perception architecture. In this context, semantic flux supports early anomaly detection and downstream interpretability while remaining independent of the specific mechanisms used for explanation or reporting. However, the framework itself is not tied to Phocoustic or to any particular software stack, sensing platform, or language model. Alternative implementations may employ different discretization strategies, persistence criteria, or downstream reasoning systems while preserving the core measurement principles described here.

More broadly, semantic flux contributes to ongoing efforts to improve reliability and transparency in intelligent systems by re-establishing measurement as a prerequisite for interpretation. Measurement produces evidence; language may act upon it—but cannot replace it. By formalizing persistent change as a measurable, transferable quantity, this work offers a foundation for safer, more reliable perceptual systems across industrial, environmental, and intelligent applications.

This appendix clarifies the representational conventions used throughout the paper.

All quantities and operations described in the main text are discrete, finite, and expressed in plain language. Mathematical notation is used sparingly and only to support conceptual clarity. No continuous field assumptions, probabilistic models, or closed-form analytical solutions are required for the definitions presented.

Spatial references (such as regions of interest and tiles) are treated as bounded, finite partitions of an observed scene. Temporal references (such as time windows and persistence intervals) refer to finite sequences of discrete observations. Normalization and accumulation operations are described descriptively rather than symbolically to emphasize interpretation over formalism.

This choice is intentional. The objective of the paper is to define a measurement framework that is precise, auditable, and transferable across domains without requiring specialized mathematical machinery. Readers should be able to understand and apply the concepts of semantic flux and semantic activation density based on their definitions and constraints, independent of notation.

A sensitivity study was conducted to assess the impact of tile size on semantic flux behavior.

Smaller tiles increased spatial precision but introduced higher susceptibility to noise, requiring stronger persistence filtering. Larger tiles reduced noise sensitivity but degraded localization and diluted early micro-drift signals.

Across datasets, intermediate tile sizes produced the most stable trade-off between localization consistency, repeatability, and lead time. Importantly, semantic flux behavior remained qualitatively consistent across tile sizes, indicating robustness to discretization choice.

Tile size selection therefore represents a tunable resolution parameter rather than a failure point of the framework.
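The dilution effect of larger tiles can be illustrated with a toy calculation. The sketch below assumes that local change is summarized as a per-tile mean, which is an illustrative choice and not a description of the discretization used in the study; the frame size and defect size are arbitrary.

```python
import numpy as np

def tile_means(frame, tile):
    """Average a 2-D array over non-overlapping tile x tile blocks."""
    h, w = frame.shape
    rows, cols = h // tile, w // tile
    trimmed = frame[:rows * tile, :cols * tile]
    return trimmed.reshape(rows, tile, cols, tile).mean(axis=(1, 3))

# A 2x2-pixel change of unit amplitude in a 16x16 frame.
delta = np.zeros((16, 16))
delta[4:6, 4:6] = 1.0

# Peak tile-level response for increasing tile sizes.
peak_by_tile = {tile: float(tile_means(delta, tile).max()) for tile in (2, 4, 8)}
```

In this toy case the peak response falls from 1.0 to 0.25 to 0.0625 as the tile grows from 2 to 4 to 8 pixels, which mirrors the qualitative observation that larger tiles dilute early micro-drift signals. Averaging over more pixels also reduces the influence of independent per-pixel noise, which is the other side of the trade-off.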

This appendix provides a high-level conceptual description of the semantic flux measurement pipeline. It is intended to clarify processing stages rather than to specify algorithms or implementation details.

For each frame in a time sequence, the spatial domain is partitioned into local tiles, and a measure of local change is computed at each tile location relative to the preceding frame.

Change contributions are then evaluated with respect to region geometry. Only tiles that lie within the defined region of interest contribute to subsequent accumulation; change occurring outside the region is suppressed or ignored.

Change that passes geometric relevance is subjected to temporal persistence validation over a finite window. Only change that is directionally coherent and persists across time contributes to the admissible change signal.

Semantic flux is computed by accumulating admissible change across all tiles within a region and across all time indices within the analysis window. Semantic activation density is obtained by normalizing this accumulated change by the spatial extent of the region and the duration of the time window.

Symbol formation, lineage tracking, and interpretive processing occur downstream of semantic flux computation and are not part of the measurement process described here.
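The stages above can be made concrete with a deliberately simplified sketch. It is not the Phocoustic implementation, whose details are not disclosed here. Every specific choice below is an assumption made only for illustration: tile means as the change measure, a binary region-of-interest mask over tiles, sign agreement over `window` successive differences as the persistence rule, and all function and parameter names.

```python
import numpy as np

def tile_means(frame, tile):
    """Average a 2-D frame over non-overlapping tile x tile blocks."""
    h, w = frame.shape
    rows, cols = h // tile, w // tile
    trimmed = frame[:rows * tile, :cols * tile]
    return trimmed.reshape(rows, tile, cols, tile).mean(axis=(1, 3))

def semantic_flux_sketch(frames, roi_tiles, tile=4, window=3):
    """Return (flux, activation_density) for a frame sequence."""
    frames = np.asarray(frames, dtype=np.float64)
    roi = np.asarray(roi_tiles, dtype=np.float64)
    tiles = np.stack([tile_means(f, tile) for f in frames])
    # Stage 1: signed local change relative to the preceding frame.
    change = np.diff(tiles, axis=0)
    # Stage 2: geometric relevance; tiles outside the ROI are suppressed.
    change = change * roi
    # Stage 3: persistence; keep change only where the last `window`
    # differences are non-zero and share the same sign (assumed rule).
    admissible = np.zeros_like(change)
    for t in range(window - 1, change.shape[0]):
        recent = change[t - window + 1:t + 1]
        coherent = (np.sign(recent) == np.sign(recent[-1])).all(axis=0)
        coherent &= recent[-1] != 0
        admissible[t] = np.where(coherent, np.abs(change[t]), 0.0)
    # Stage 4: accumulate, then normalize by ROI extent and duration.
    flux = float(admissible.sum())
    area = float(np.count_nonzero(roi))
    duration = change.shape[0]
    density = flux / (area * duration) if area and duration else 0.0
    return flux, density

# Toy sequence: one tile drifts steadily, another flickers up and down.
frames = np.zeros((8, 8, 8))
for t in range(8):
    frames[t, 0:4, 0:4] = t        # persistent drift
    frames[t, 4:8, 4:8] = t % 2    # alternating flicker

flux_all, density_all = semantic_flux_sketch(frames, np.ones((2, 2)))
flux_flicker, _ = semantic_flux_sketch(frames, [[0, 0], [0, 1]])
```

In this toy sequence only the steadily drifting tile contributes to the accumulated value; the flickering tile fails the sign-agreement check in every window and contributes nothing.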

Supplementary figures include:

Extended time-series plots comparing semantic flux with baseline metrics

Tile-wise heatmaps illustrating flux accumulation under nuisance and defect conditions

Repeatability plots across identical experimental runs

Early warning examples with synchronized frame sequences and activation curves

These visualizations reinforce the results presented in Section 8 and are provided to support qualitative inspection and reproducibility.

Version 1.0. This document is intended as a technical white paper and may be updated in future versions.

This white paper outlines the conceptual foundations of the Phocoustic™ semantic drift engine, a framework for interpreting change using physics-anchored principles rather than solely statistical or training-dependent models. The descriptions below summarize motivations, outcomes, and possible applications while avoiding any disclosure of patent-protected internal mechanisms.

The system is grounded in the idea that meaningful anomalies and early-stage instabilities often reveal themselves through structured, persistent change rather than isolated visual features. Phocoustic focuses on representing and contextualizing this change, enabling downstream modules—semantic, cognitive, or otherwise—to operate with physically qualified evidence.

Traditional computer-vision pipelines rely heavily on pattern recognition. While powerful, these approaches may struggle in environments where defects are rare, visually subtle, or highly variable. Even advanced neural networks can overlook early instability signals because such signals may not appear prominently in pixel intensity alone.

Phocoustic provides an alternative viewpoint. Rather than evaluating “what an object looks like,” Phocoustic focuses on “how an object behaves across time.” This shift allows the system to highlight physical irregularities that precede conventional defect signatures. Classical drift phenomena—small displacements, localized reflectance deviations, micro-stress indicators—may become visible long before any overt failure or defect appears.

Phocoustic's physics-anchored semantic drift extraction refers to a family of representations and filtering principles that emphasize persistent, structured, and physically plausible change. Phocoustic does not evaluate images in isolation. Instead, it seeks stable temporal patterns that may indicate emerging anomalies.

Phocoustic highlights change that aligns with known physical properties such as motion continuity, spatial coherence, surface reflectance patterns, and domain-specific expectations. Changes inconsistent with the environment—such as random noise—are conceptually deprioritized.

The specifics of the Phocoustic framework (including internal data structures, admissibility criteria, quantization flows, and cross-module interactions) are patent-protected and intentionally omitted from this summary.

Phocoustic serves as a foundational layer that prepares evidence for additional stages of interpretation. The Phocoustic system includes conceptual modules that perform:

Phocoustic’s role is not to label defects, diagnose causes, or determine meaning. Instead, it aims to provide a physically qualified representation of change that downstream reasoning layers can interpret within their own patent-defined frameworks.

Drift-centered evaluation enables several key advantages in industrial, scientific, and mobility settings:

Phocoustic platforms are designed to operate across diverse environments where physical change is meaningful. Example applications include:

These examples illustrate potential use cases rather than implementation details. The underlying methods remain protected by Phocoustic Inc.’s patent filings.

Phocoustic provides a stability-oriented foundation for a broader physics-anchored cognitive framework. Early reasoning and semantic-development components rely on evidence that reflects real physical coherence over time, rather than correlations derived solely from statistical pattern matching. Phocoustic contributes by preparing representations of change that are physically qualified and consistent with these requirements.

The cognitive framework itself is a classical computational system, not a biological model. In this framework, semantic activation occurs only when evidence satisfies multiple layers of consistency, persistence, and contextual validation. The internal mechanisms governing this cognitive gating and decision control are intentionally not described here and are defined exclusively within protected patent filings.

Phocoustic represents a conceptual shift from appearance-based inspection toward physics-anchored interpretation of change. By emphasizing coherent drift patterns, Phocoustic supports early anomaly detection, explainability, and downstream cognitive evaluation across industrial, scientific, and mobility applications.

All technical specifics (including algorithms, rules, and architectures) appear only in Phocoustic Inc.’s patent filings and are not included in this public white paper.

This appendix is provided to clarify the intent, scope, and legal posture of the accompanying document. The material presented herein is offered solely as a conceptual, high-level architectural overview and is not intended to disclose, teach, enable, or limit any proprietary invention, method, system, or implementation owned by Phocoustic, Inc.

Nothing in this document is intended to constitute an enabling disclosure under 35 U.S.C. §112 or any analogous provision of international patent law. Specific algorithms, data structures, execution flows, parameterizations, thresholds, control logic, optimization strategies, feedback mechanisms, memory models, or decision criteria are intentionally omitted or abstracted. A person having ordinary skill in the art would not be able to implement the described systems or methods based solely on this document.

All technical implementations, claim-defining structures, execution sequences, and functional relationships are defined exclusively within Phocoustic, Inc.’s issued patents, pending patent applications, continuations, continuations-in-part, provisional applications, non-provisional applications, and international filings. In the event of any inconsistency between this document and any patent filing, the patent filing shall control.

Nothing in this document shall be construed as:

a disclaimer of claim scope,

a definition of claim terms,

an admission of prior art,

a limitation on equivalents,

or a characterization of essential or required elements.

Descriptions of components, modules, layers, or functions are illustrative and non-limiting, and are not intended to restrict the scope of any present or future claims.

The architectural descriptions provided are not exhaustive. Certain components, interactions, variants, embodiments, optional features, alternative implementations, and future developments are deliberately excluded. The absence of any feature or function from this document shall not be interpreted as an absence from the invention(s) themselves.

References to future capabilities, conceptual frameworks, cognitive models, governance structures, or developmental mechanisms are forward-looking and non-binding. Such references are provided to convey technical intent and research direction and do not represent completed systems, deployed products, or finalized implementations.

Nothing herein shall be construed as an admission that any described element, concept, or functionality is known, conventional, routine, or standard in the art. All described constructs are asserted to be proprietary to Phocoustic, Inc., except where explicitly stated otherwise.

Phocoustic, Inc. expressly reserves all rights, including but not limited to patent rights, trade secret rights, copyright rights, and rights under international treaties. No license, express or implied, is granted by this document.

This document is intended for informational and explanatory purposes only. It is not a technical specification, implementation guide, or design document. Any interpretation of the invention(s) described herein shall be governed solely by the claims of the applicable patent filings as issued or pending.

Phocoustic’s Physics-Anchored Semantic Drift Engine (PASDE) evaluates change (“drift”) as a measurable, physics-bounded signal rather than as a visual feature or an object to be classified. The system does not attempt to determine what is present in an image. Instead, it evaluates how change evolves over time and whether that evolution is consistent with physically plausible continuity. This approach emphasizes prediction-oriented assessment rather than reactive classification.

PASDE operates within a persistence-anchored framework that draws inspiration from both optical and acoustic signal analysis, enabling measurable, auditable system behavior in domains where precision, safety, and accountability are critical.

A first impression can be striking yet transient. By contrast, reliability emerges only when behavior persists over time, remains consistent under re-observation, and does not contradict prior evidence. PASDE evaluates change according to these principles, emphasizing persistence and lineage rather than instantaneous appearance.

A change is treated as meaningful only if it remains consistent across different viewpoints, illumination conditions, timing intervals, or sensing configurations. Changes that appear only under a single capture condition are treated as provisional and may be discounted.

Passing a single test is insufficient. PASDE evaluates change across multiple admissibility constraints. Artifacts may satisfy one condition but fail others, whereas physically grounded phenomena tend to remain coherent when evaluated across independent constraints.

Within the PASDE framework, change is evaluated using abstractions that emphasize continuity and persistence rather than independent frame-to-frame differences. This design reflects the observation that physically evolving processes tend to exhibit directionality and stability over time.

As change persists within the system’s admissibility framework, it becomes increasingly constrained by its own history. Future evaluations are informed by prior accepted change, and conclusions remain provisional unless supported by sustained, consistent evidence. Revision remains possible, but only when new observations provide sufficient compensating support.

This approach mirrors well-established scientific practice: conclusions remain open to revision, but revision is guided by evidence rather than isolated observations.

Physical change may exist independently of interpretation, but semantic relevance is defined only within a declared operational context. PASDE distinguishes between physically admissible change and semantically active change.

Project- or domain-specific semantic boundaries define when persistent drift is relevant. Change may be physically real yet remain semantically inactive if it falls outside declared scope. This prevents over-generalization and limits interpretation to contexts where meaning is justified.

In many technical domains, prediction benefits from representations that emphasize causality, persistence, and continuity rather than instantaneous appearance. Transverse representations are effective for localization, detection, and classification tasks but can be sensitive to viewpoint, illumination, and sampling effects.

Representations inspired by longitudinal signal behavior emphasize continuity and material response over time. In certain contexts, this emphasis supports earlier identification of emerging instability, as persistent change may alter system behavior before surface-level effects become visually apparent.

PASDE adopts this perspective at a representational level, without asserting equivalence to physical wave propagation. The system evaluates whether change behaves in a manner consistent with sustained physical evolution rather than transient variation.

Within PASDE, drift is treated as directional, accumulated change rather than simple difference. Transient noise and flicker tend to decorrelate over time, while coherent change remains stable across lineage. This distinction allows persistent change to be emphasized while incidental variation diminishes naturally.

Drift is not treated as a direct physical quantity. Instead, it serves as a representational construct used to condition how the system evaluates continuity, stability, and admissibility across time.
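The contrast between accumulated directional change and simple difference can be illustrated numerically. The sketch below is a toy model only: flicker is represented as a deterministic alternating signal, a simplification of noise that decorrelates over time, and the amplitudes and frame count are arbitrary assumptions.

```python
import numpy as np

frames = 100
t = np.arange(frames)

# Coherent drift: a small step in the same direction every frame.
drift_steps = np.full(frames, 0.1)
# Flicker: larger per-frame amplitude, but alternating in sign.
flicker_steps = np.where(t % 2 == 0, 1.0, -1.0)

# Per-frame magnitude favors flicker; signed accumulation favors drift.
drift_peak_step = float(np.abs(drift_steps).max())
flicker_peak_step = float(np.abs(flicker_steps).max())
drift_total = float(drift_steps.sum())
flicker_total = float(flicker_steps.sum())
```

Judged frame by frame, the flicker is ten times larger than the drift. Judged by signed accumulation over the sequence, the drift reaches about 10 while the flicker cancels to zero, which is the sense in which incidental variation diminishes while persistent change is emphasized.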

As drift persists, it becomes part of an evolving lineage that informs subsequent evaluation. Past observations constrain future interpretations within the system’s framework, reducing sensitivity to isolated reversals while preserving the ability to revise conclusions when warranted by evidence.

This lineage-based approach supports careful diagnosis rather than premature commitment. Conclusions remain provisional and subject to revision, consistent with scientific and engineering best practices.

PASDE does not perform semantic labeling, object classification, or probabilistic inference. It does not attempt to explain why a change occurred. Its role is limited to qualifying whether observed change behaves in a manner consistent with physical continuity before any higher-level interpretation is applied by downstream systems.

The framework is designed to operate alongside existing inspection, diagnostic, or decision-support systems, providing an additional layer of physics-anchored evidence evaluation.

Environmental conditions, sensing geometry, operational context, and historical behavior can influence how meaning develops within a physics-anchored system. Persistent drift and contextual consistency may condition sensitivity and interpretation thresholds over time without altering the underlying structural framework.

This approach allows adaptive yet stable system behavior across varying operational conditions while preserving auditability and traceability.

PASDE emphasizes persistence, physical plausibility, and lineage-aware evaluation of change. By prioritizing how change behaves over time rather than how it appears in isolated moments, the system supports prediction-oriented assessment while avoiding premature interpretation. Meaning remains bounded by context, revision remains possible, and conclusions remain grounded in sustained evidence.

The white paper experiment was designed to probe the lowest-contrast boundary at which a physics-anchored semantic system can distinguish meaningful change from background stability. Uniform white paper was selected as a deliberately adversarial substrate: visually simple, low texture, and typically assumed to be “featureless” by conventional inspection and AI systems.

The intent was not to detect defects, but to characterize the system’s behavior at ground zero—the point at which physical variation is minimal and semantic interpretation should be withheld unless justified by persistent, admissible drift.

At first glance, the figures presented in this paper may appear visually unremarkable. Several frames depict surfaces that would typically be described as uniform or featureless, and in some cases differences between frames are difficult to detect without careful side-by-side comparison. This apparent simplicity is intentional.

The objective of this experiment is not to showcase visually obvious anomalies, but to examine the boundary at which physical change becomes semantically eligible. By selecting an adversarial substrate with minimal texture and contrast, the experiment forces a separation between what is perceptible and what is physically admissible. Readers are therefore encouraged to interpret the figures not as images to be visually “read,” but as inputs to a structured drift evaluation process governed by persistence, coherence, and physical plausibility.

Importantly, the absence of visually salient features should not be interpreted as an absence of signal. Throughout this paper, intermediate representations reveal micro-variation, background instability, and low-amplitude drift that are invisible or ambiguous to human observers. The significance of these representations lies not in their visual appeal, but in whether detected variation survives admissibility filtering and propagates downstream.

Figure 1 should therefore be understood as a baseline characterization, not a null result. It establishes the conditions under which the system explicitly withholds semantic interpretation, despite the presence of measurable but non-admissible variation. Subsequent figures build upon this baseline to demonstrate how instability, preparation quality, and perceptual bias influence whether change is treated as meaningful or suppressed.

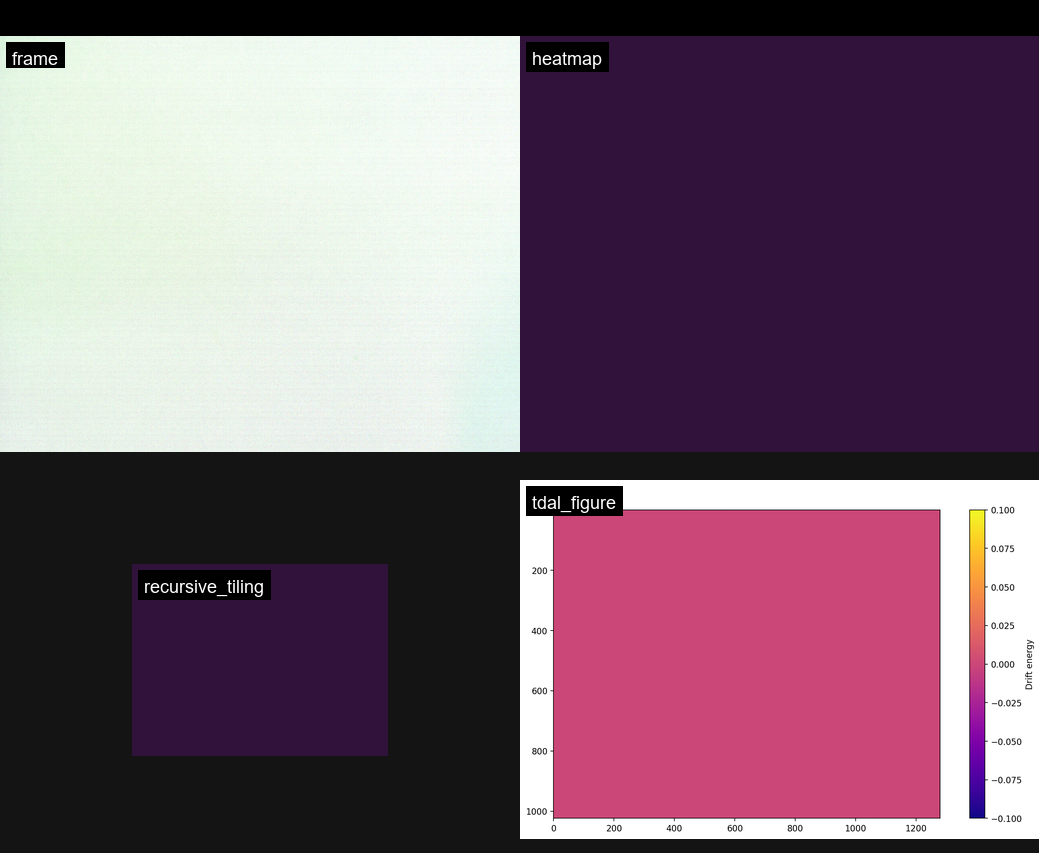

The first image set (Figure 1) shows a properly prepared reference sequence, presented as a four-panel composite:

Frame: Visually uniform white paper under controlled illumination

Heatmap: Low-amplitude, spatially diffuse drift consistent with sensor and illumination noise

Recursive tiling: Fine-grained texture revealing micro-variation without directional structure

TDAL snapshot: Near-zero drift energy across the field, with no admissible regions flagged

The TDAL panel visualizes the spatial distribution of admissible drift energy after physics-based filtering, indicating whether detected variation survives persistence and coherence constraints.

Despite the apparent “noise” visible in intermediate representations, the system correctly treated this configuration as baseline-stable. Drift was present but non-persistent, non-directional, and uniformly distributed. No semantic escalation occurred.

This result is important: it demonstrates that the system does not require visual texture or contrast to establish stability, nor does it hallucinate structure in visually sparse scenes.

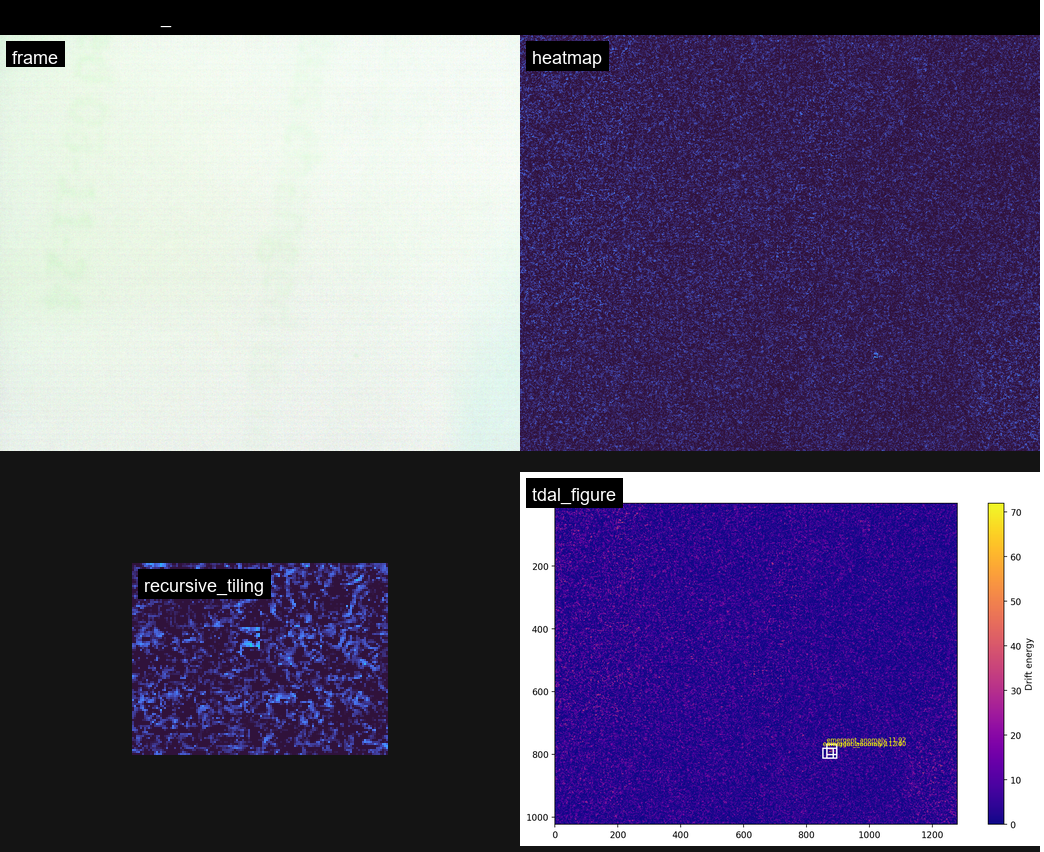

The second image set (Figure 2) originated from a poorly prepared sequence. Although visually similar to the reference configuration, this set contained subtle but uncontrolled physical variations, including minor mechanical shifts, illumination inconsistency, and surface settling effects.

The resulting outputs differed markedly:

The heatmap collapsed into a saturated, low-information field, indicating loss of contrast between null and perturbation states.

Recursive tiling showed uniform suppression rather than structured micro-variation.

The TDAL snapshot exhibited a flat response dominated by background instability rather than localized drift energy.

At first glance, this appeared to be a failed experiment. However, it produced a critical insight: baseline instability can dominate the signal space to the extent that meaningful comparison becomes impossible, even when the scene appears visually unchanged.

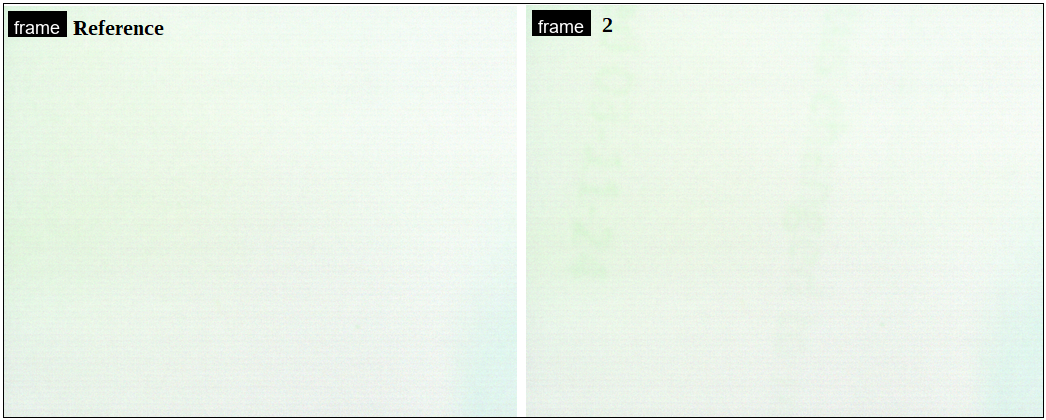

Figure 3 presents a side-by-side comparison between the reference frame and a subsequent frame (frame_0002) captured under nominally identical conditions. Upon close visual inspection, a human observer can perceive subtle differences between the two images, including faint tonal gradients and low-amplitude illumination variation. These differences are real and perceptible, particularly when the images are compared directly.

Critically, however, these visually detectable differences do not propagate into the downstream analytical representations (heatmap, recursive tiling, and TDAL outputs) of the four-panel composites shown elsewhere in this study. Although human perception registers a change, the physics-anchored pipeline does not treat the variation as admissible drift.

This outcome highlights an important distinction between perceptual difference and physically meaningful change. Human vision is highly sensitive to relative contrast and contextual comparison, often detecting differences that are transient, non-persistent, or physically unstructured. By contrast, the system evaluates whether a detected variation exhibits sufficient persistence, directional coherence, and physical plausibility to qualify as drift rather than background fluctuation.

In this case, the observed difference fails to satisfy those admissibility criteria. As a result, it is correctly suppressed and does not manifest as elevated drift energy, localized anomalies, or semantic escalation in subsequent representations.

This figure therefore demonstrates a key design principle of the system: the presence of a visible difference is not sufficient to justify semantic interpretation. Only variations that survive physical admissibility filtering—across time, structure, and coherence—are permitted to influence downstream analysis. The system’s refusal to amplify a human-visible but physically weak difference underscores its resistance to false positives and perceptual bias.
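The persistence requirement described above can be illustrated with a minimal sketch. It is not the system's admissibility filter, whose implementation is not given here; it covers only the persistence criterion (not directional coherence or physical plausibility), and the function name `persistent_mask`, the `threshold` value, and the `min_frames` parameter are hypothetical choices made for illustration. A pixel is retained only if its deviation from the reference exceeds the threshold in at least `min_frames` of the supplied frames, so a difference that appears in a single frame is suppressed.

```python
import numpy as np


def persistent_mask(reference, frames, threshold, min_frames):
    """Return True where |frame - reference| > threshold in >= min_frames frames.

    Illustrative persistence test only; thresholds are assumptions.
    """
    if len(frames) == 0:
        raise ValueError("at least one frame is required")
    ref = reference.astype(np.float64)
    # One boolean exceedance map per frame, stacked along a new time axis.
    exceed = np.stack(
        [np.abs(f.astype(np.float64) - ref) > threshold for f in frames]
    )
    # Count, per pixel, how many frames exceeded the threshold.
    return exceed.sum(axis=0) >= min_frames


# Synthetic data (not measurements from this study).
reference = np.zeros((3, 3))
frames = [reference.copy() for _ in range(3)]
for f in frames:
    f[0, 0] = 5.0          # deviation present in every frame
frames[1][1, 1] = 5.0      # deviation present in one frame only

mask = persistent_mask(reference, frames, threshold=2.0, min_frames=3)
```

In this synthetic case only the pixel that deviates in all three frames survives; the single-frame deviation is discarded, mirroring the distinction between a visible difference and persistent drift.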

The contrast between Figures 1 and 2 reveals a central result of this study:

At extremely low contrast, baseline preparation matters more than the perturbation itself.

In the unstable configuration, ground-zero noise overwhelmed the system’s ability to resolve admissible drift. Rather than producing false positives or averaging the instability away, the pipeline effectively refused to assign meaning. This behavior is not a limitation—it is an intentional safeguard.

Conventional AI or reference-based inspection systems would typically:

Treat both scenes as equivalent,

Smooth over instability through averaging, or

Produce spurious classifications driven by statistical artifacts.

By contrast, the physics-anchored pipeline surfaced the instability itself as the dominant signal and withheld semantic interpretation.

These results establish several prerequisites for semantic emergence in physics-anchored systems:

Baseline convergence must precede comparison

Semantic layering cannot be evaluated until physical invariance is established.

Ground-zero noise must be characterized, not ignored

What appears visually negligible can be structurally decisive at low drift amplitudes.

Failure modes are diagnostic

A flat or suppressed response under unstable conditions is evidence of correct system behavior, not insufficiency.

Meaning is staged, not inferred

Semantic interpretation arises only after persistent, directional drift survives admissibility filtering.

Although simple in construction, the white paper experiment exposed a boundary condition rarely documented in inspection or AI literature: the transition from physical admissibility to semantic eligibility.

The most valuable outcome did not come from the clean reference case alone, but from the contrast with an improperly prepared baseline. Together, these results demonstrate that semantic systems grounded in physics must first solve the problem of physical stability—and must be allowed to reject interpretation when that stability is absent.

This finding directly informs subsequent experimental design and provides empirical justification for staged semantic pipelines, where meaning is earned rather than assumed.

Author: Stephen Francis

Affiliation: Phocoustic, Inc.

Date: March 1, 2026

Thin films deposited on textured surfaces often produce distributed spectral redistribution without generating visually discernible boundaries or geometric discontinuities. Conventional vision-based inspection systems, which rely on edge contrast or predefined defect morphology, may struggle to detect such low-contrast, non-object-level perturbations.

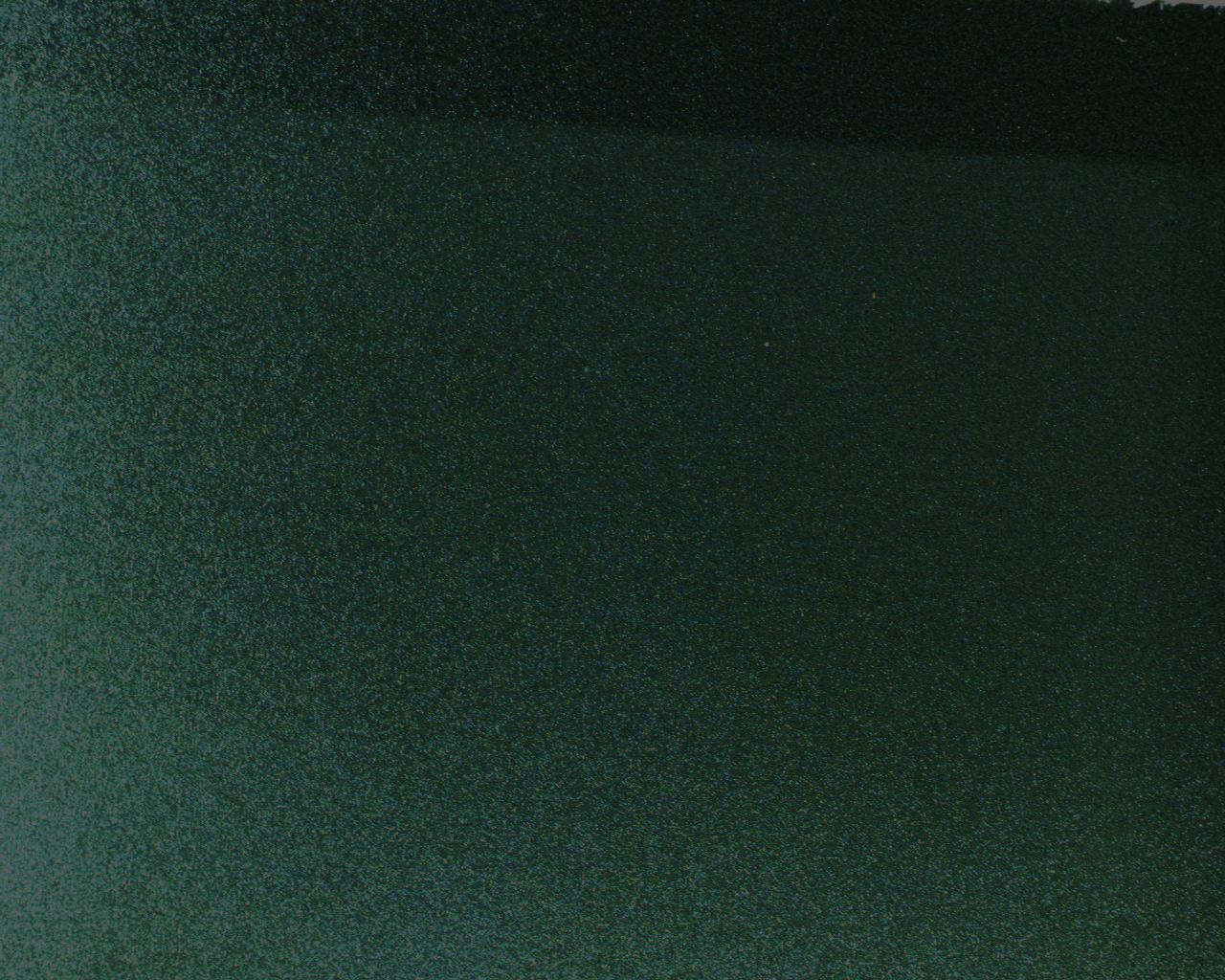

This study presents a physics-anchored drift framework for quantifying visually imperceptible thin-film deposition relative to a defined reference state. A controlled experiment was conducted using a matte polymer substrate under fixed darkfield illumination with all adaptive camera functions disabled. A baseline (Golden) image and a detect image containing a thin alcohol film were captured under identical acquisition parameters.

Although the two regions were visually indistinguishable—even under brightness-enhanced inspection—quantitative drift metrics revealed measurable distributed deviation. Baseline metrics remained at zero (drift_mean = 0), while the thin-film condition exhibited elevated distributed activation (drift_mean = 58.79; padr_dist_score = 59.02) without object-level structural emergence (strt_Lcc = 0.0103).

These results demonstrate reference-anchored detection of distributed conformance loss without reliance on machine learning or probabilistic inference. The findings support drift-based State Conformance measurement as a viable framework for detecting sub-perceptual surface perturbations under controlled acquisition conditions.

Thin films present a unique challenge in surface inspection. Unlike cracks, scratches, or geometric defects, thin films:

Do not necessarily form visible boundaries.

Do not produce strong edge gradients.

Often manifest as distributed micro-scattering changes.

May remain below human perceptual thresholds.

Traditional computer vision systems rely heavily on:

Edge detection.

Intensity thresholding.

Machine learning classification trained on labeled defect examples.

Such approaches may fail when perturbations are distributed rather than localized.

The objective of this study is to evaluate whether a deterministic, physics-anchored drift framework can:

Detect thin-film deposition that is visually indistinguishable from baseline.

Quantify deviation relative to a defined physical reference.

Distinguish distributed haze from object-level defect emergence.

Operate without reliance on machine learning training.

All image acquisition was performed using a fixed camera geometry under controlled darkfield illumination. The imaging configuration was optimized for quantitative drift measurement rather than aesthetic visualization.

The camera position, working distance, focus, and illumination geometry were mechanically fixed and not altered between baseline (Golden) and detect (thin-film) captures. The substrate remained stationary throughout the experiment to preserve pixel-level spatial correspondence.

Images were acquired in native sensor output format without post-capture normalization, histogram equalization, or adaptive contrast adjustment prior to drift computation. Brightness-enhanced figures included in this paper are presentation-only renderings and were not used in any quantitative analysis.

To ensure deterministic measurement integrity, all adaptive camera functions within the ICentral acquisition software were explicitly disabled prior to image capture. The following automatic adjustments were disabled:

Auto exposure

Auto gain

Auto white balance

Auto contrast normalization

Auto gamma correction

Any adaptive sharpening or dynamic enhancement functions

All acquisition parameters were manually configured and held constant for both Golden and Detect captures.

Brightness and raw gain were intentionally set conservatively to preserve dynamic range and avoid saturation. This configuration may produce images that appear visually dark; however, it ensures that pixel intensity values remain within a linear operating regime and that measured variation reflects physical surface change rather than camera adaptation.

No parameter was altered between baseline and detect conditions. The presence of the thin film was the only experimental variable.

Drift computation was performed directly on raw pixel intensity values captured under fixed acquisition parameters.

Let:

I_ref(x, y) represent baseline intensity

I_detect(x, y) represent detect intensity

Drift magnitude is defined as:

D(x, y) = |I_detect(x, y) − I_ref(x, y)|

All drift metrics (drift_mean, drift_p95, drift_max, padr_dist_score, strt_S, strt_Lcc) were computed exclusively from these raw intensity differences.

No gamma correction, tone mapping, or nonlinear scaling was applied prior to computation. Visualization scaling shown in figures was applied only for presentation clarity and was not used in analysis.
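The drift definition above can be sketched in a few lines of Python with NumPy. This is an illustration under stated assumptions, not the study's implementation: the function names are hypothetical, and the summary statistics assume that drift_mean, drift_p95, and drift_max are the mean, 95th percentile, and maximum of D(x, y), as their names suggest. The remaining metrics (padr_dist_score, strt_S, strt_Lcc) are not defined in this text and are deliberately omitted.

```python
import numpy as np


def drift_map(i_ref: np.ndarray, i_detect: np.ndarray) -> np.ndarray:
    """Per-pixel drift magnitude D(x, y) = |I_detect(x, y) - I_ref(x, y)|."""
    if i_ref.shape != i_detect.shape:
        raise ValueError("reference and detect frames must have the same shape")
    # Cast before subtracting: unsigned sensor values would otherwise wrap
    # around instead of producing a negative difference.
    return np.abs(i_detect.astype(np.float64) - i_ref.astype(np.float64))


def drift_summary(d: np.ndarray) -> dict:
    """Summary statistics of a drift map (definitions assumed from the names)."""
    return {
        "drift_mean": float(d.mean()),
        "drift_p95": float(np.percentile(d, 95)),
        "drift_max": float(d.max()),
    }


# Synthetic 8-bit frames (not data from this study).
i_ref = np.full((4, 4), 100, dtype=np.uint8)
i_detect = i_ref.copy()
i_detect[0, 0] = 90    # darker than reference
i_detect[1, 1] = 120   # brighter than reference

d = drift_map(i_ref, i_detect)
summary = drift_summary(d)
baseline_summary = drift_summary(drift_map(i_ref, i_ref))
```

Comparing the reference frame with itself yields a drift map of zeros, which is the sense in which a baseline drift_mean of 0 arises under this definition.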

Under the selected exposure and gain parameters, the imaging sensor is assumed to operate within a linear response regime. Conservative brightness and gain settings were chosen to avoid:

Saturation

Clipping

Nonlinear amplification

Auto-compensation artifacts

Because all adaptive features were disabled and illumination was fixed, pixel intensity variation is assumed to be linearly proportional to reflectance variation within the ROI.

Under that assumption, measured drift values reflect proportional physical changes in surface micro-scattering response rather than camera-induced normalization.
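One way to support the linear-regime assumption is to confirm that a captured frame contains no pixels at the extremes of the sensor's code range. The sketch below is hypothetical and was not part of the reported procedure; the function name `clipping_report` and the default `bit_depth` of 8 are assumptions. It can flag saturation and clipping, but it cannot by itself demonstrate that the sensor response is linear.

```python
import numpy as np


def clipping_report(frame: np.ndarray, bit_depth: int = 8) -> dict:
    """Fraction of pixels at the lowest and highest representable code values."""
    max_code = (1 << bit_depth) - 1
    return {
        "low_fraction": float((frame <= 0).mean()),
        "high_fraction": float((frame >= max_code).mean()),
    }


# Synthetic dark frame with a single saturated pixel (not study data).
frame = np.full((4, 4), 40, dtype=np.uint8)
frame[0, 0] = 255

report = clipping_report(frame)
```

A non-zero `high_fraction` would indicate that some pixels have reached the top of the range, in which case differences measured at those pixels understate the true change.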

An identical pixel-coordinate ROI was extracted from both Golden and Detect frames. The ROI was defined prior to quantitative analysis and applied symmetrically without modification.

This eliminates:

Cropping asymmetry

Spatial selection bias

Algorithmic region scanning effects

All drift metrics were computed exclusively within this fixed ROI.
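The symmetric ROI rule can be expressed as a single ROI definition that is fixed before analysis and applied to both frames by the same function. The following is a minimal sketch under assumed names (`Roi`, `crop`) and with synthetic coordinates; it does not reproduce the ROI used in this study.

```python
from typing import NamedTuple

import numpy as np


class Roi(NamedTuple):
    """Pixel-coordinate rectangle, defined once and reused for every frame."""
    top: int
    left: int
    height: int
    width: int


def crop(frame: np.ndarray, roi: Roi) -> np.ndarray:
    """Extract the ROI, refusing coordinates that fall outside the frame."""
    bottom = roi.top + roi.height
    right = roi.left + roi.width
    if roi.top < 0 or roi.left < 0 or bottom > frame.shape[0] or right > frame.shape[1]:
        raise ValueError("ROI extends beyond the frame")
    return frame[roi.top:bottom, roi.left:right]


# Hypothetical ROI and synthetic frames (not study data).
ROI = Roi(top=1, left=2, height=2, width=3)
golden = np.arange(30).reshape(5, 6)
detect = golden + 1

golden_roi = crop(golden, ROI)
detect_roi = crop(detect, ROI)
```

Because both crops come from the same immutable `ROI` value, the two regions share pixel coordinates by construction, and an out-of-bounds ROI raises an error rather than being silently truncated.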

Drift-based metrics were computed directly from raw image captures. No brightness enhancement, visualization scaling, or image adjustment was applied prior to metric computation.

This ensures that reported metrics reflect physically captured optical response rather than post-processing artifacts.

Accurate quantification of thin-film deposition requires controlled spatial comparison between a defined reference state and a detect state. In distributed perturbation scenarios—such as thin-film haze—full-frame analysis may dilute subtle deviations by incorporating unaffected regions. For this reason, a fixed Region of Interest (ROI) was defined and analyzed identically across both Golden (baseline) and Detect (thin-film) captures.
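The dilution effect mentioned above can be shown with simple arithmetic on synthetic numbers. In the sketch below, the frame size, the affected patch, and the drift value of 50 are invented for illustration and are not measurements from this study.

```python
import numpy as np

# Synthetic drift map: a 20 x 20 affected patch inside a 100 x 100 frame.
drift = np.zeros((100, 100))
drift[40:60, 40:60] = 50.0

# Full-frame mean averages the affected 4% of pixels with the unaffected 96%.
full_frame_mean = float(drift.mean())

# ROI mean is computed only over the bounded region of interest.
roi_mean = float(drift[40:60, 40:60].mean())
```

Here the full-frame mean is 2.0 while the ROI mean is 50.0: the same physical deviation appears 25 times weaker when unaffected regions are included in the average.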

The ROI serves as the bounded spatial domain within which deterministic drift quantification is performed. It was manually defined prior to analysis and applied symmetrically to both frames without modification. The selected region satisfies the following constraints:

Identical pixel coordinates in both reference and detect images

Fixed optical and geometric acquisition conditions